AI is no longer a future concept in asset-intensive industries. It is already influencing how integrity teams collect data, prepare reports, assess risk, and justify decisions. Yet despite the growing pressure to “have an AI strategy,” most organizations are still struggling to turn AI ambition into operational value.

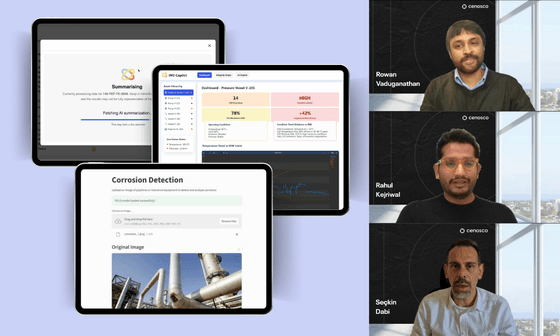

In Cenosco’s recent webinar with Rahul Kejriwal, Rowan Vaduganathan, and Seçkin Dabi, the discussion focused on separating practical reality from AI hype: where AI can genuinely improve integrity outcomes today, and what must change before more advanced use cases become viable.

The Current State: High Expectations, Low Operational Relief

Across the oil & gas, chemical, and process industries, integrity teams face a familiar challenge: increasing asset age, rising inspection volumes, and growing expectations without a proportional increase in resources. Much of an engineer’s time is still spent on manual, low-value activities such as data consolidation, report writing, and navigating fragmented systems.

AI has not yet solved this problem at scale. A major reason is that many AI initiatives are introduced from the top down, driven by executive pressure rather than workflow realities. While leadership teams are rightly concerned about falling behind in AI adoption, engineers on the ground often see AI as disconnected from their day-to-day challenges.

The result is a mismatch: high strategic urgency, but limited day-to-day impact.

Where AI Delivers Immediate, Credible Value

Rather than attempting to automate entire integrity processes, the most effective AI use cases today focus on reducing friction in existing workflows.

One of the clearest examples is integrity reporting. Annual and regulatory reports routinely take weeks to complete, not because analysis is complex, but because data is scattered across spreadsheets, inspection reports, folders, CMMS platforms, and integrity management systems. AI can already help by structuring, summarising, and assembling this information into consistent outputs, saving engineers significant time while improving traceability and transparency.
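To make the assembly step concrete, here is a minimal sketch of the deterministic part of that workflow: grouping findings from several sources by asset while keeping a source tag on every line for traceability. It is plain Python rather than AI, and the source names, the `asset_id` and `finding` fields, and the `assemble_report` function are all hypothetical, not taken from any specific product.

```python
from collections import defaultdict


def assemble_report(sources):
    """Group findings by asset and tag each one with the source it came from.

    `sources` maps a source name (hypothetical, e.g. "cmms") to a list of
    records, each a dict with "asset_id" and "finding" keys (assumed schema).
    """
    by_asset = defaultdict(list)
    for source_name, records in sources.items():
        for rec in records:
            by_asset[rec["asset_id"]].append((source_name, rec["finding"]))

    lines = []
    for asset_id in sorted(by_asset):
        lines.append(f"Asset {asset_id}")
        for source_name, finding in by_asset[asset_id]:
            # Keeping the source next to each finding preserves traceability.
            lines.append(f"  - {finding} [source: {source_name}]")
    return "\n".join(lines)


if __name__ == "__main__":
    example_sources = {
        "cmms": [{"asset_id": "P-220", "finding": "Gasket replaced"}],
        "inspection_reports": [
            {"asset_id": "P-220", "finding": "External corrosion at support"},
            {"asset_id": "V-101", "finding": "No indications"},
        ],
    }
    print(assemble_report(example_sources))
```

In practice, a summarising model would sit on top of a structured intermediate like this, so that every sentence in the final report can be traced back to a source record.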

Similarly, AI shows promise in helping engineers interpret large inspection datasets, particularly as advanced inspection techniques generate volumes of data that exceed what a single individual can realistically analyze. In these cases, AI does not replace engineering judgment; rather, it supports pattern recognition, highlights anomalies, and reduces cognitive load.
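As a simple illustration of "highlighting anomalies" for an engineer to review, the sketch below flags outlying wall-thickness readings using a modified z-score based on the median absolute deviation. This is a basic statistical technique chosen for illustration, not the method discussed in the webinar; the function name, the example readings, and the 3.5 threshold (a commonly cited convention) are assumptions.

```python
from statistics import median


def flag_anomalies(readings, threshold=3.5):
    """Return indices of readings whose modified z-score exceeds `threshold`.

    Uses the median and the median absolute deviation (MAD), which are less
    distorted by the outliers themselves than the mean and standard deviation.
    """
    med = median(readings)
    mad = median(abs(x - med) for x in readings)
    if mad == 0:
        # At least half the readings equal the median; the score is undefined.
        return []
    return [
        i
        for i, x in enumerate(readings)
        if 0.6745 * abs(x - med) / mad > threshold
    ]


if __name__ == "__main__":
    # Hypothetical wall-thickness readings in millimetres.
    thickness_mm = [12.1, 12.0, 12.2, 11.9, 12.1, 8.4, 12.0]
    for index in flag_anomalies(thickness_mm):
        print(f"Reading {index} ({thickness_mm[index]} mm) needs engineering review")
```

The output is a shortlist for a person to assess, not a verdict: whether a flagged reading reflects real wall loss or a measurement error remains an engineering judgment.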

Why AI Still Fails: Data, Context, and Trust

Despite early successes, AI continues to face structural limitations in asset integrity applications. The most fundamental challenge is data quality. Integrity data is often incomplete, inconsistent, and scattered across multiple sources, such as inspection reports, spreadsheets, maintenance databases, and legacy platforms. AI cannot correct these issues on its own. At best, it can surface inconsistencies and gaps more quickly; at worst, it can amplify them by producing confident-looking outputs based on weak foundations.
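Surfacing gaps before any model sees the data can be as simple as a rule-based check. The sketch below assumes a hypothetical record schema (`asset_id`, `inspection_date`, `wall_thickness_mm`) and reports missing or implausible values without trying to repair them.

```python
# Hypothetical minimum schema for an inspection record.
REQUIRED_FIELDS = ("asset_id", "inspection_date", "wall_thickness_mm")


def find_data_gaps(records):
    """Return (record_index, issue) tuples for missing or implausible values."""
    issues = []
    for i, rec in enumerate(records):
        for field in REQUIRED_FIELDS:
            if rec.get(field) in (None, ""):
                issues.append((i, f"missing {field}"))
        thickness = rec.get("wall_thickness_mm")
        # A measured wall thickness can never be zero or negative.
        if isinstance(thickness, (int, float)) and thickness <= 0:
            issues.append((i, "non-positive wall_thickness_mm"))
    return issues


if __name__ == "__main__":
    example_records = [
        {"asset_id": "V-101", "inspection_date": "2023-05-01", "wall_thickness_mm": 12.4},
        {"asset_id": "V-101", "inspection_date": "", "wall_thickness_mm": 12.1},
        {"asset_id": "P-220", "inspection_date": "2024-01-15", "wall_thickness_mm": -1.0},
        {"asset_id": None, "inspection_date": "2024-02-01"},
    ]
    for index, issue in find_data_gaps(example_records):
        print(f"record {index}: {issue}")
```

A check like this only reports problems; deciding how to resolve each one still falls to the people who own the data.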

Context presents an equally significant barrier. Many predictive and detection models struggle to incorporate real-world operational conditions such as process upsets, transient operating states, fouling behavior, or temporary deviations from design intent. Without this context, AI outputs may appear statistically valid but fail to reflect how assets actually behave in service. For integrity professionals, this disconnect undermines confidence and limits practical adoption.

Trust is the final, and often decisive, factor. Asset integrity operates within a highly regulated, high-consequence environment where decisions must be technically defensible and auditable. Engineers need to understand not only what a model is recommending, but why. Black-box AI systems that cannot explain their logic, assumptions, or confidence levels struggle to gain acceptance, regardless of their technical sophistication. Until AI models provide transparency, traceability, and alignment with engineering reasoning, their role will remain supportive rather than authoritative.

The Foundation for Scalable AI: Interoperability and Structure

A consistent insight throughout the discussion was that AI feasibility is established long before any model is deployed. Interoperable systems, structured data, and repeatable workflows are not enhancements layered on top of AI; they are the conditions that make AI viable in the first place. Without them, even the most advanced algorithms struggle to move beyond isolated experimentation.

Most asset owners operate complex and fragmented IT landscapes for valid operational, regulatory, and historical reasons. Expecting organizations to replace these systems in pursuit of AI is neither realistic nor necessary. The real challenge, and the real opportunity, lies in connectivity. When data can flow across inspection tools, integrity systems, maintenance platforms, and enterprise applications, it becomes possible to contextualize and structure information in a way that AI can meaningfully interpret.

This connectivity also enables consistency. Structured data and aligned workflows ensure that AI outputs are based on comparable inputs, improving reliability and reducing uncertainty. In contrast, disconnected systems force AI to operate on partial views of the asset, limiting accuracy and eroding trust among engineers who rely on defensible, auditable insights.

This is where many AI proofs of concept ultimately fail. When interoperability is missing, AI initiatives remain confined to narrow use cases: they can neither scale nor integrate into core integrity processes. Instead of becoming decision-support capabilities, they remain standalone tools, impressive in isolation but disconnected from how integrity work is actually performed. Building scalable AI therefore starts not with models but with foundational work: connecting systems, structuring data, and aligning workflows.

People Remain Central to Integrity Outcomes

Perhaps the most important takeaway is that AI does not reduce the importance of people; it increases it. Integrity decisions carry long-term safety, environmental, and financial consequences. Human accountability cannot be removed from the loop.

What will change is the required skill set. Engineers who can leverage AI tools effectively will outperform those who cannot. Organizations that invest in training, change management, and user-centric design will see far greater returns than those that treat AI as a purely technical deployment.

AI works best as an accelerator: it handles repetitive tasks, surfaces insights, and frees engineers to focus on judgment, prioritization, and decision-making.

Looking Ahead: Incremental Progress, Not a Big Bang

The future of asset integrity will be increasingly connected, continuous, and data-driven. Advances in sensors, remote inspection tools, robotics, and autonomous data collection are already changing how information is gathered in the field. As these technologies mature, the volume, frequency, and variety of integrity data will continue to increase significantly.

However, greater data availability does not equate to immediate autonomy. In the near term, AI adoption will remain incremental and purpose-driven. The most successful applications will focus on targeted problems, such as report generation, data structuring, anomaly detection, and decision support, where value can be measured and validated. These use cases build confidence, reduce manual effort, and create the operational space needed for more advanced capabilities to emerge.

More ambitious applications, including real-time integrity assessment and AI-assisted decision-making, depend on foundations being established today. Data quality, system interoperability, and workflow alignment are prerequisites, not afterthoughts. Equally important is organizational readiness: engineers must be equipped with the skills, tools, and trust required to work effectively alongside AI.

In 2026, progress will be defined less by dramatic breakthroughs and more by steady, cumulative gains. Organizations that invest early in data, connectivity, and people will be best positioned to turn AI from an experiment into a durable integrity capability.

Key Takeaway

AI is neither a silver bullet nor a passing trend.

For asset integrity teams, success lies in applying AI where it reduces real pain, strengthening the foundations that make AI trustworthy, and keeping engineers at the center of the process.

2026 will not be defined by fully autonomous integrity decisions, but by smarter workflows, better-supported engineers, and AI that delivers practical, defensible value.
