Most AIM implementation projects do not fail at go-live. They fail months earlier, when the project is framed as a software rollout rather than a data-and-decision-model transformation.

I have seen that pattern too many times to call it bad luck. The software is usually not the issue. The engineers are usually not the issue either. The issue is that too many Asset Integrity Management projects begin with the target platform and only later confront the reality of the source landscape.

That is backward. In real implementation work, the hard part is rarely the screen. The hard part is working out how an operator actually identifies assets, where inspection histories really live, which hierarchy is treated as authoritative by which team, and whether two records with different names are in fact the same piece of equipment.

For years, I led implementation programs across different industries and different starting points. The lesson is simple: AIM implementations fail for understandable reasons. They fail when complexity is hidden, when validation starts too late, and when migration is treated as a file-moving exercise rather than an engineering data transformation.

The difference between a painful implementation and a controlled one is not optimism. It is a method.

The Three Implementation Realities I Know Well

AIM Implementation in Greenfield Projects: Fast Does Not Mean Simple

The cleanest project is a genuine greenfield implementation: no legacy integrity software, no local patchwork of half-governed databases, no debate over whether the spreadsheet or the PDF report is the real source of truth. These projects move faster because the work is mostly design, configuration, governance, and training, with no legacy data to reconcile. They do not move faster because they are simple. Even greenfield requires decisions about structure, ownership, roles, workflows, and the level of standardization the client wants from day one.

AIM Implementation in Brownfield Environments Without a System of Record

This is where implementation becomes real work. The hierarchy may sit in SAP, Maximo, or a similar system, while wall thickness measurements are spread across Excel files and PDF reports. Condition histories for visual inspections may live in documents. Some schedules are managed in SAP, others in Excel. The same relief valve may be named three different ways depending on who created the source. That is not an exception. That is the normal starting point in brownfield AIM projects.

AIM Implementation During Migration From Legacy Integrity Software

The third scenario is migration from a different software environment. Sometimes it is one legacy application. More often, it is a combination of tools accumulated over years: older Cenosco systems such as RRM, S-RBI, and SIFpro; competitor environments such as Ultrapipe, Meridium/GE APM, PCMS, Visions, Sphera, exSILentia, DNV Synergi, Rosen, Credo; or home-grown combinations held together with spreadsheets and local logic. After more than twenty years of migrations, the surprise factor is low. The real challenge is not export and import. It is preserving engineering intent while simplifying the application landscape, rather than copying its chaos into a newer interface.

Example: If SAP shows a hierarchy, Excel holds the thickness history, PDF reports hold the condition notes, and schedules are split between SAP and local files, you do not have a migration problem yet. You have an interpretation problem. Until names, structure, and logic are reconciled, loading data only moves ambiguity into a new system.

Where AIM Implementation Actually Fails

Common failure patterns teams repeat:

Scoping Issues

They are scoped like software projects instead of operating-model projects. If the early conversation focuses on modules, screens, and timelines before anyone has produced a credible data inventory, the project is already exposed. AIM implementation is never only about installing software. It is about aligning structure, naming, history, engineering logic, and decision ownership so that the software reflects how the asset base should be managed.

The source landscape is underestimated. I still hear versions of the same sentence: “Most of the data is in SAP.” Usually, that means the hierarchy is in SAP. It does not mean corrosion histories, wall thickness series, visual findings, schedules, and local engineering decisions are also in SAP. When teams discover too late that the real history lives elsewhere, the schedule starts slipping for a very predictable reason.

Timing & Responsibility Problems

Validation starts too late. If the project waits until UAT to discover that mappings are wrong, that schedules were duplicated, or that histories cannot be tied to the right equipment, it is no longer validating. It is recovering. The practical rule is simple: mapping assumptions and business logic need to be exposed early, shown back to the client, and confirmed before large volumes of data are pushed through the pipeline.

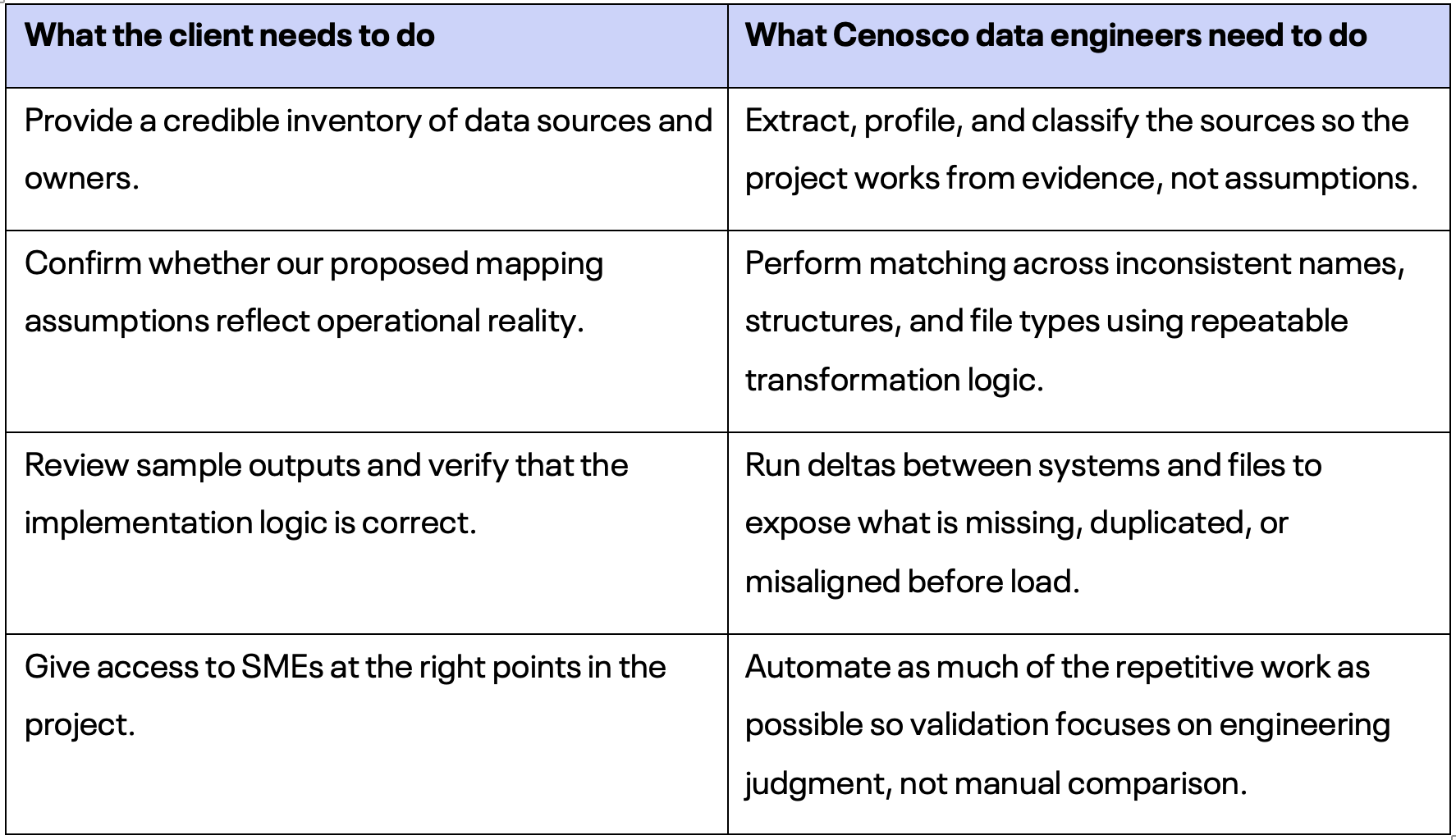

Responsibility is split badly. The client absolutely matters, but not in the way many failed projects assume. Clients are essential for three things: providing the data inventory, confirming and validating mapping assumptions, and verifying the implementation logic. They should not be expected to do the heavy transformation work themselves. That is where our data engineers come in. They handle matching, reconciliation, automation, and the unglamorous technical work required to migrate data from every file type and tool you can imagine.

Data Quality & Rollout Errors

Naming conventions are trusted more than they deserve. Brownfield data is full of aliases, local abbreviations, truncated tags, and naming conventions that made sense to one team at one moment in time. If that is not confronted early, traceability suffers immediately. In AIM, poor identification is not just bad master data. It undermines confidence in every inspection and maintenance decision attached to that asset.
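To make the alias problem concrete, here is a minimal sketch of a first-pass tag comparison. It assumes that spellings differ mainly in case, separators, and zero-padding. The function names and sample tags are hypothetical, and the output is only a list of candidates for the client to confirm, not a set of confirmed matches.

```python
import re
from collections import defaultdict


def normalize_tag(tag: str) -> str:
    """Reduce a tag to a comparison key: uppercase, no separators, no zero-padding."""
    key = re.sub(r"[^A-Za-z0-9]", "", tag).upper()
    # Strip leading zeros from each run of digits so "PSV-0101" matches "PSV101".
    return re.sub(r"\d+", lambda m: str(int(m.group())), key)


def group_aliases(tags):
    """Group raw tags by normalized key; keep only keys with more than one spelling."""
    groups = defaultdict(set)
    for tag in tags:
        groups[normalize_tag(tag)].add(tag)
    return {key: sorted(names) for key, names in groups.items() if len(names) > 1}


# Hypothetical sample: three spellings of one relief valve plus an unrelated pump.
candidates = group_aliases(["PSV-0101", "psv 101", "PSV_101", "P-200A"])
```

A key this aggressive will also merge tags that are genuinely different (for example, `V-1-01` and `V-101`), which is why the result should be shown back to the client rather than applied automatically.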

Legacy replacement is treated as a lift-and-shift exercise. A new platform does not fix old logic by default. If the old system contains duplicate histories, inconsistent hierarchies, or discipline-specific workarounds, blindly carrying all of that forward only modernizes the interface. It does not modernize the operating model. Replacement has to include rationalization.

The rollout tries to solve everything at once. The most resilient projects usually start with the highest-impact scope, not with the broadest possible one. They prove the data logic, the integration pattern, and the user workflow where value is clearest, then scale. Big-bang ambition is often just a polite form of avoidable risk.

What Clients Tell Us Before an AIM Implementation Project Goes Sideways

“Our data is not ready yet.” That is rarely a reason to stop. It is a reason to classify the starting point honestly. Very few clients begin with clean data. The real question is whether the sources are visible and whether the project is willing to work through them methodically.

“We can validate that later.” Usually not safely. The later mapping and logic are validated, the more expensive every correction becomes.

“We only need migration support.” Often what the project really needs is logic harmonization, naming reconciliation, and delta analysis before migration even starts.

“Every site does it a little differently.” Exactly. That is why enterprise implementations need explicit standardization decisions, not hidden local assumptions.

Anecdotes From the Field – The Data Is Never as Clean as the Sample

One of the fastest ways to derail an AIM implementation is to assume that source data is basically correct.

It almost never is.

Not because clients are careless. Not because their engineers do not know their assets. But because years of operational history leave scars:

- workarounds

- manual overrides

- contractor files

- partial exports

- naming mismatches

- old rules nobody remembers

- data that made sense in one system but not in the next

That is why I have always believed AIM implementation success depends less on moving data and more on challenging it before it moves.

A few projects made that lesson impossible to ignore.

Upstream SAP Export

On one upstream asset, we were reviewing an SAP Maintenance Plan export and noticed something that should have been impossible: around 20% of the maintenance plans showed the next inspection date in the 1970s. Not one or two records. Not a corner case. Roughly one plan in five. On paper, that kind of issue looks absurd. In a live AIM implementation, it is dangerous. If you load that blindly, you are not just importing bad dates. You are importing false confidence into future planning, reporting, and inspection logic.

That case is exactly why we introduced verification logic around inspection scheduling instead of trusting raw export fields at face value. We started checking whether the next inspection date actually made sense when compared against the last inspection date plus the applicable interval. In other words, not just “what does the source say?” but “does the source behave like an inspection program should behave?” That is the difference between migration and engineering. Migration copies fields. Engineering tests whether those fields tell a credible story.
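The check described above (next date compared against last date plus interval) can be sketched in a few lines. This is an illustration under stated assumptions, not the verification logic used on that project: the function name, the month-based interval, and the 31-day tolerance are all hypothetical choices.

```python
from datetime import date, timedelta


def check_next_inspection(last: date, interval_months: int, next_due: date,
                          tolerance_days: int = 31) -> list:
    """Return the reasons why next_due looks implausible (empty list if none)."""
    issues = []
    if next_due < last:
        issues.append("next inspection date is before the last inspection")
    # Approximate the interval in days; good enough for a plausibility screen.
    expected = last + timedelta(days=round(interval_months * 30.44))
    if abs((next_due - expected).days) > tolerance_days:
        issues.append(
            f"next inspection date is not close to last + interval ({expected.isoformat()})"
        )
    return issues


# Hypothetical records: a plausible plan and one with a 1970s next-inspection date.
ok = check_next_inspection(date(2020, 3, 1), 60, date(2025, 3, 1))
suspect = check_next_inspection(date(2020, 3, 1), 60, date(1975, 1, 1))
```

Returning reasons rather than a pass/fail flag keeps the result usable as a question for the client: each flagged plan carries its own explanation.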

LNG Client’s 777 Excel Files

Another case came from an LNG client doing RCM assessments for roughly 100,000 equipment items. The delivery package arrived in 777 Excel files. Every single file had six sheets: RCM hierarchy, RCM systems, failure modes, RCM criticality matrix, tasks, and MEI calls. On its own, that is already a serious implementation challenge. The hierarchy did not fully match the SAP export, which is common enough. But after a while, the numbers stopped reconciling in ways that did not look like normal mapping noise. Relationships were breaking. Counts were off. Logic chains that should have tied together cleanly were not adding up.

This is where AIM implementation teams get tested. You either assume the source is messy and keep pushing, or you stop and investigate properly.

Our internal validation logic kept flagging anomalies, and eventually we found the issue. In one of those 777 files, in three cells where the contractor should have entered text and numbers, they had pasted a screenshot instead. An actual screenshot. Not a value. Not a comment. A screenshot inside the cell area. On one level, it is funny. On another, it is the perfect example of why large-scale migrations fail when people imagine the problem is “just Excel.” Excel is not a format. It is an ecosystem of human behavior. Once you have enough files, enough contributors, and enough deadlines, strange things will happen. The point is not to be surprised by that. The point is to build a process that catches it before it contaminates the target system.
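One cheap screen for this kind of surprise relies on the fact that an `.xlsx` file is a ZIP package and that Excel conventionally stores embedded pictures as parts under `xl/media/`. The sketch below assumes that convention and only reports that a workbook contains images, not which cell they cover. The function name is hypothetical, and this is not the internal validation logic mentioned above.

```python
import io
import zipfile


def embedded_media(xlsx_file) -> list:
    """List media parts (pictures, pasted screenshots) inside an .xlsx package."""
    with zipfile.ZipFile(xlsx_file) as package:
        return [name for name in package.namelist() if name.startswith("xl/media/")]


# Demo with a tiny in-memory stand-in for a workbook package (not a valid workbook).
demo = io.BytesIO()
with zipfile.ZipFile(demo, "w") as package:
    package.writestr("xl/worksheets/sheet1.xml", "<worksheet/>")
    package.writestr("xl/media/image1.png", b"not a real image")
demo.seek(0)
flagged = embedded_media(demo)
```

For a delivery package that is supposed to contain only text and numbers, any workbook with a non-empty result is worth opening by hand before it goes further down the pipeline.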

Wall Thickness Migration (1M+ Data Points)

Then there was the wall thickness migration from an older legacy tool into IMS. This one was large even by industry standards: more than a million data points from two major refiners, covering over 25 years of history. When you first hear that, it sounds like a dream dataset. Long history, dense measurements, strong basis for analysis. But when we started examining the trends, some thickness readings were behaving in ways that made no physical sense. Values were going down, then up, then down again. Components appeared to be thickening over time, which is not a corrosion mechanism anybody wants to discover for the first time in a dataset.

Of course, there can be valid reasons for that behavior. A component may have been renewed. A location may have changed. A measurement point may have been redefined. Data may have been corrected. But those are exactly the kinds of events that need to be understood, not silently imported.

So we added a validation layer before import. The logic flagged odd wall thickness behavior and highlighted records that might indicate replacements, corrections, renewals, or other discontinuities in the history. Yes, IMS itself can flag inconsistencies once the data is inside the system. But from an implementation perspective, it is always better to prevent known issues one step earlier, while the client can still confirm what actually happened. That changes the conversation. Instead of discovering suspicious behavior after migration and debating whether the new system is wrong, you bring the client a structured question before import: this trend does not behave like continuous degradation, can you confirm whether there was a renewal or data correction here?
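A pre-import screen of this kind could look roughly like the following sketch. It assumes readings are grouped per measurement location and dated in ISO format. The function name, the sample history, and the 0.5 mm tolerance are hypothetical, and a real threshold would depend on the measurement technique.

```python
def flag_thickness_increases(readings, tolerance_mm=0.5):
    """Flag consecutive readings where thickness rises by more than tolerance_mm.

    readings: iterable of (iso_date, thickness_mm) for ONE measurement location.
    """
    ordered = sorted(readings)  # ISO dates sort chronologically as strings
    flags = []
    for (prev_date, prev_mm), (curr_date, curr_mm) in zip(ordered, ordered[1:]):
        increase = curr_mm - prev_mm
        if increase > tolerance_mm:
            flags.append((prev_date, curr_date, round(increase, 2)))
    return flags


# Hypothetical history: steady thinning, then a jump that suggests a renewal.
history = [("2001-05-10", 9.8), ("2006-05-12", 9.1), ("2011-06-01", 8.5),
           ("2016-05-20", 9.9), ("2021-05-18", 9.6)]
questions = flag_thickness_increases(history)
```

Each flagged pair of dates maps directly to the question for the client: was there a renewal, a relocation of the measurement point, or a data correction between these two readings?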

That is a much better implementation moment. It is technical, but it is also collaborative. And that matters.

The Real Legacy Replacement Challenge

Because this is what clients often underestimate when they start a legacy replacement project: the hardest work is not loading tables. It is separating operational truth from operational noise.

The upstream case taught us not to trust schedule fields without schedule logic. The LNG case taught us that large Excel-based programs need industrial-grade validation, because scale amplifies even ridiculous human errors. The wall thickness case taught us that long historical datasets are valuable only if you respect their discontinuities.

None of these examples are exotic. That is the point.

This is normal brownfield reality.

What “Easy Implementation” Really Means

And this is also why “easy implementation” should never mean simplistic implementation. Easy, in a credible sense, means the hard checks have already been operationalized.

It means:

- the team is not manually hunting for impossible dates in SAP exports

- strange Excel behavior gets detected before it becomes a migration defect

- historical thickness data is challenged for engineering plausibility before it enters the target model

- implementation is supported by repeatable verification logic, not heroics

That is how you replace legacy integrity systems without repeating the same mistakes.

Not by assuming the data is clean. By assuming it isn’t.

The Work Split That Keeps Implementation Moving

The healthiest projects are explicit about what the client must own and what Cenosco must absorb. When that split is vague, frustration starts early.

The Internal Engine Behind “Easy AIM Implementation”

Easy implementation is often misunderstood. It does not mean the client has no work to do, or that brownfield complexity disappears. It means the complexity is handled by design instead of being left to improvisation.

Internally, that only became credible because we systemized the implementation layer. We stopped treating hard migration tasks as one-off heroics and turned them into repeatable methods, utilities, and knowledge assets.

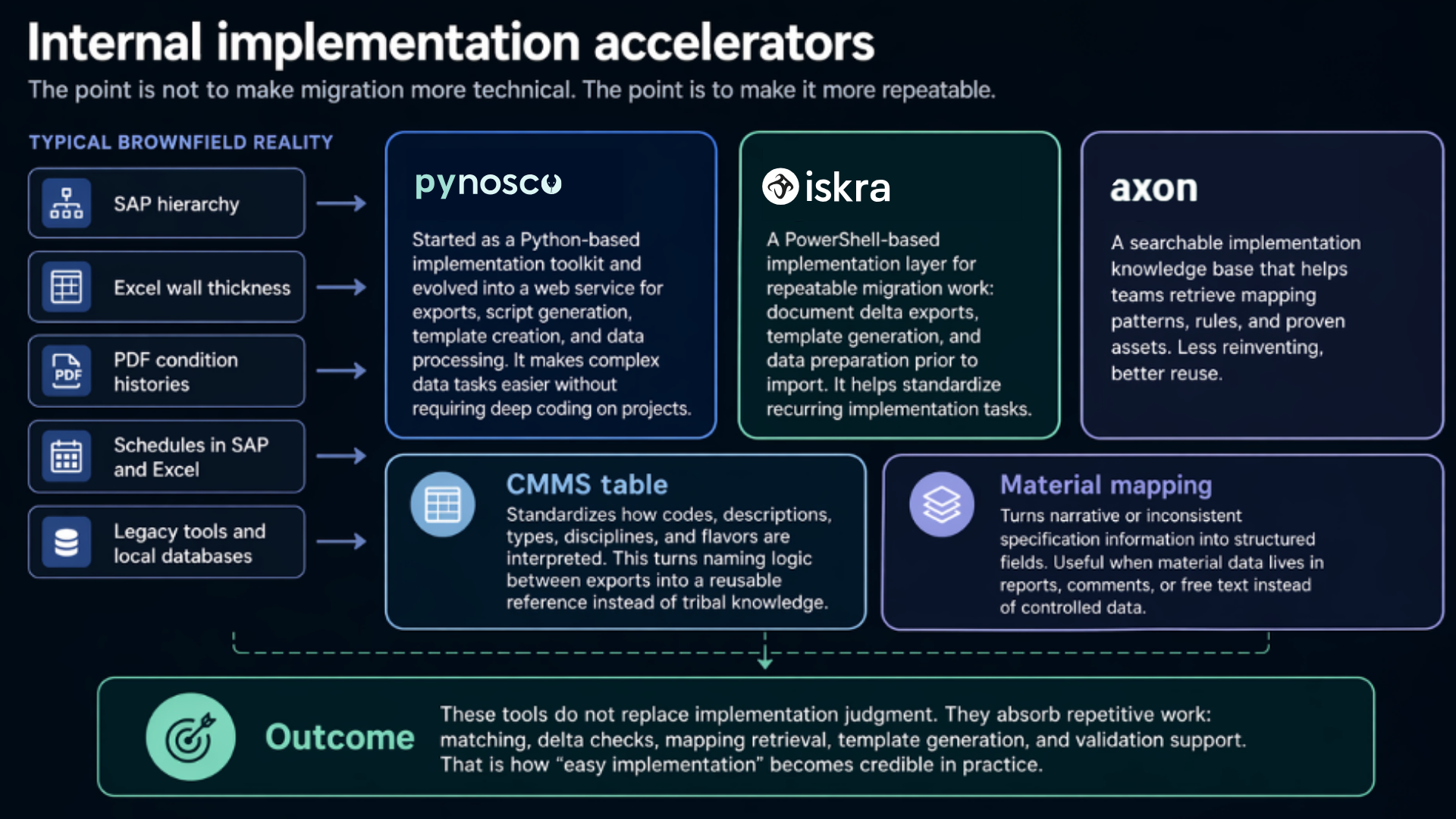

Figure 1. How internal implementation tools turn fragmented source data into repeatable migration work.

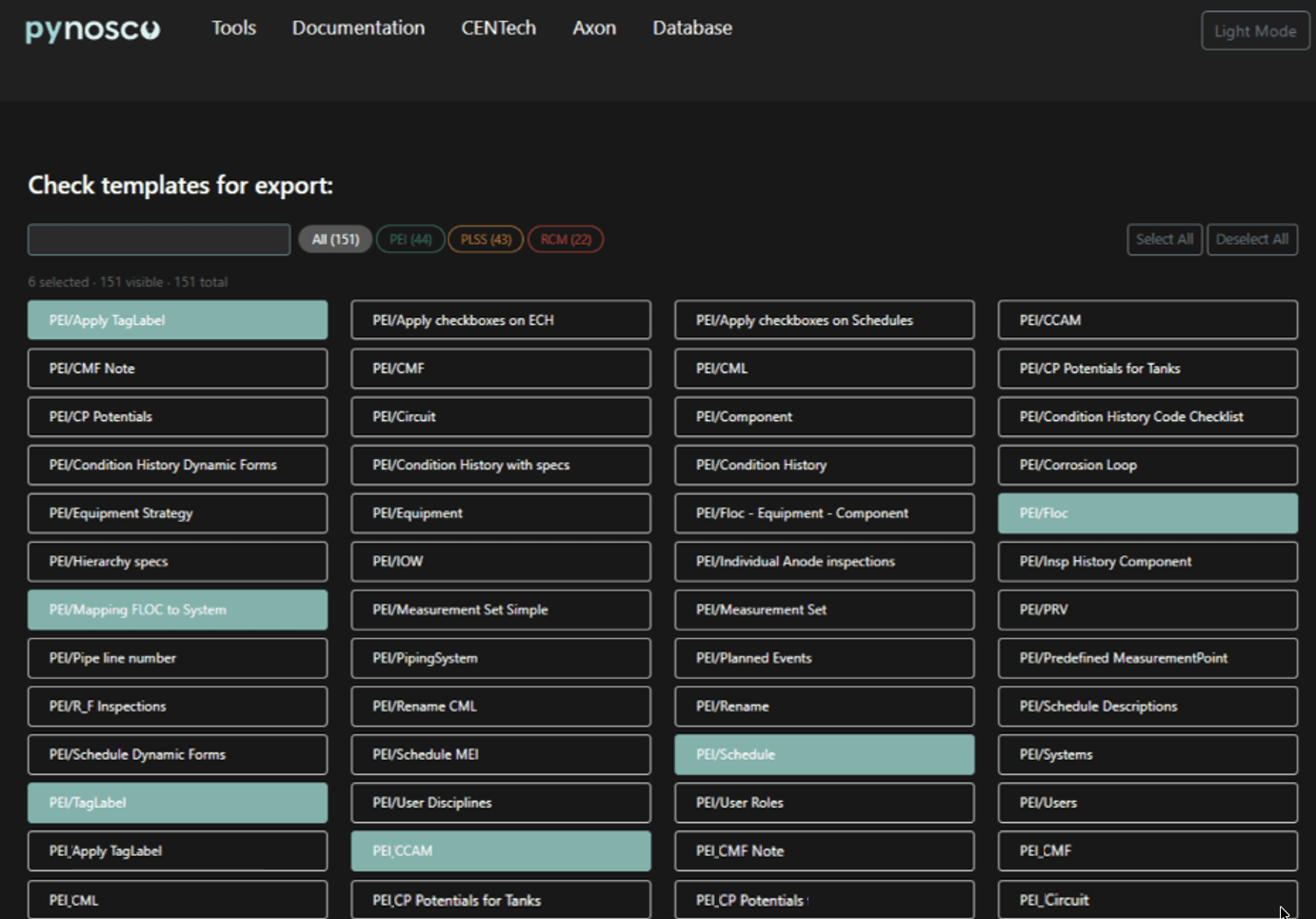

Pynosco: From Python to Web Service

Pynosco started life as a Python-based toolkit used by the implementation team for command execution and data processing. The important point is not the language. The important point is what it did: it turned recurring migration pain into something structured. Over time, the useful routines became easier to access through a web service so people did not need Python knowledge just to run practical checks, generate scripts, or process tables.

Iskra: PowerShell for Repeatable Tasks

Iskra represents the implementation-hardened version of that thinking. It shifted key activities into Windows PowerShell and terminal-based commands so repeatable tasks could be run without Python expertise. One very clear example is delta generation: compare SAP FLOC and equipment exports with an implementation profile, generate structured delta templates, and make the differences visible early instead of discovering them during testing.
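The idea behind delta generation can be illustrated with a short sketch. It is written in Python for consistency with the other examples here, and it does not reproduce Iskra's commands or file formats. The function name, the dictionary shape, and the sample FLOC identifiers are all hypothetical.

```python
def build_delta(sap_export, profile):
    """Compare two {floc_id: description} mappings and classify the differences."""
    sap_ids, profile_ids = set(sap_export), set(profile)
    return {
        "only_in_sap": sorted(sap_ids - profile_ids),
        "only_in_profile": sorted(profile_ids - sap_ids),
        "description_changed": sorted(
            floc for floc in sap_ids & profile_ids if sap_export[floc] != profile[floc]
        ),
    }


# Hypothetical inputs: what SAP currently exports vs. what the implementation expects.
sap = {"A-100": "Separator", "A-110": "Relief valve", "A-120": "Pump"}
expected = {"A-100": "Separator", "A-110": "Safety valve", "A-130": "Heat exchanger"}
delta = build_delta(sap, expected)
```

Splitting the result into three buckets keeps the review focused: additions, removals, and changed descriptions usually need different people to confirm them.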

Axon: The Knowledge Layer

Axon plays a different but equally important role. It is the knowledge layer behind AIM implementation: a database used for easier retrieval of the information, mappings, and reusable reference points that teams need during migration. In practice, that means less reinvention and a shorter path from question to answer.

Practical AIM Implementation Helpers

Then there are the practical helpers that remove friction from detail work. The CMMS table makes mapping logic explicit across codes, descriptions, FLOC types, groups, disciplines, modules, and flavors. Material-mapping workflows help convert narrative, messy, or semi-structured specification information into fields the target system can actually use. None of this is decorative. This is the quiet work that makes projects feel controlled.

Replacing Legacy Systems without Repeating Legacy Mistakes

Legacy replacement should reduce complexity, not relocate it. That means deciding what deserves to be migrated, what deserves to be standardized, and what deserves to be left behind. Not every workaround in the old environment deserves a future in the new one.

In practice, that starts with uncomfortable but necessary questions:

- Which hierarchy should become authoritative?

- Which naming rules will survive?

- Which data is critical enough to reconcile manually if automation cannot settle it?

- Which sources are historical reference only, and which must become operational data?

These are implementation questions, not IT afterthoughts.

This is also why I do not buy the idea that successful replacement is mainly about speed. Speed matters, but trust matters more. If engineers do not trust the migrated context around an asset, they will keep shadow records, local spreadsheets, and private logic. At that point, the legacy landscape never really went away.

The better target is controlled confidence: the client sees the data inventory, understands the mapping assumptions, validates the logic, and sees the deltas early enough to correct them. That is what allows adoption to follow go-live instead of resisting it.

Conclusion

Most AIM implementations fail for very ordinary reasons. The project starts too high in the stack. The source data is more fragmented than the early meetings admit. Validation is postponed. Responsibility is split badly. Legacy logic is copied instead of challenged.

The answer is not more theatre around digital transformation. It is disciplined implementation. Classify the starting point correctly. Inventory the data. Reconcile naming. Run deltas early. Let the client validate the assumptions and logic. Let the data engineers do the heavy lifting. Use internal tools to make repeatable work faster and less mysterious.

That is what ‘easy implementation’ should mean in our world. Not simplistic. Not effortless. Systemized.

Easy does not mean the data is simple. It means the complexity has been absorbed by the method.


Andrea Mišur Head of Revenue Growth

Andrea is the Head of Revenue Growth at Cenosco, where she leverages her background in science and engineering to drive technology-driven strategic planning and business execution.